Enterprise solutions for AI are changing almost every part of our lives. As a result, governments are rapidly catching up and issuing guidelines, frameworks, policy and legislation to protect citizens and society. As with GDPR before it, businesses and public bodies will have no choice – public demand for transparency and accountability will continue to grow as the influence of AI expands.

We are excited by the opportunity that AI promises. We are deploying AI software solutions across our services, bringing efficiency and insight to our clients’ digital solutions. By combining sustained investment in our consultants’ skills with a sector-leading Responsible AI approach, Hippo are well placed to help organisations deploy AI in their workplaces and services.

As responsible institutions, our clients require responsible AI.

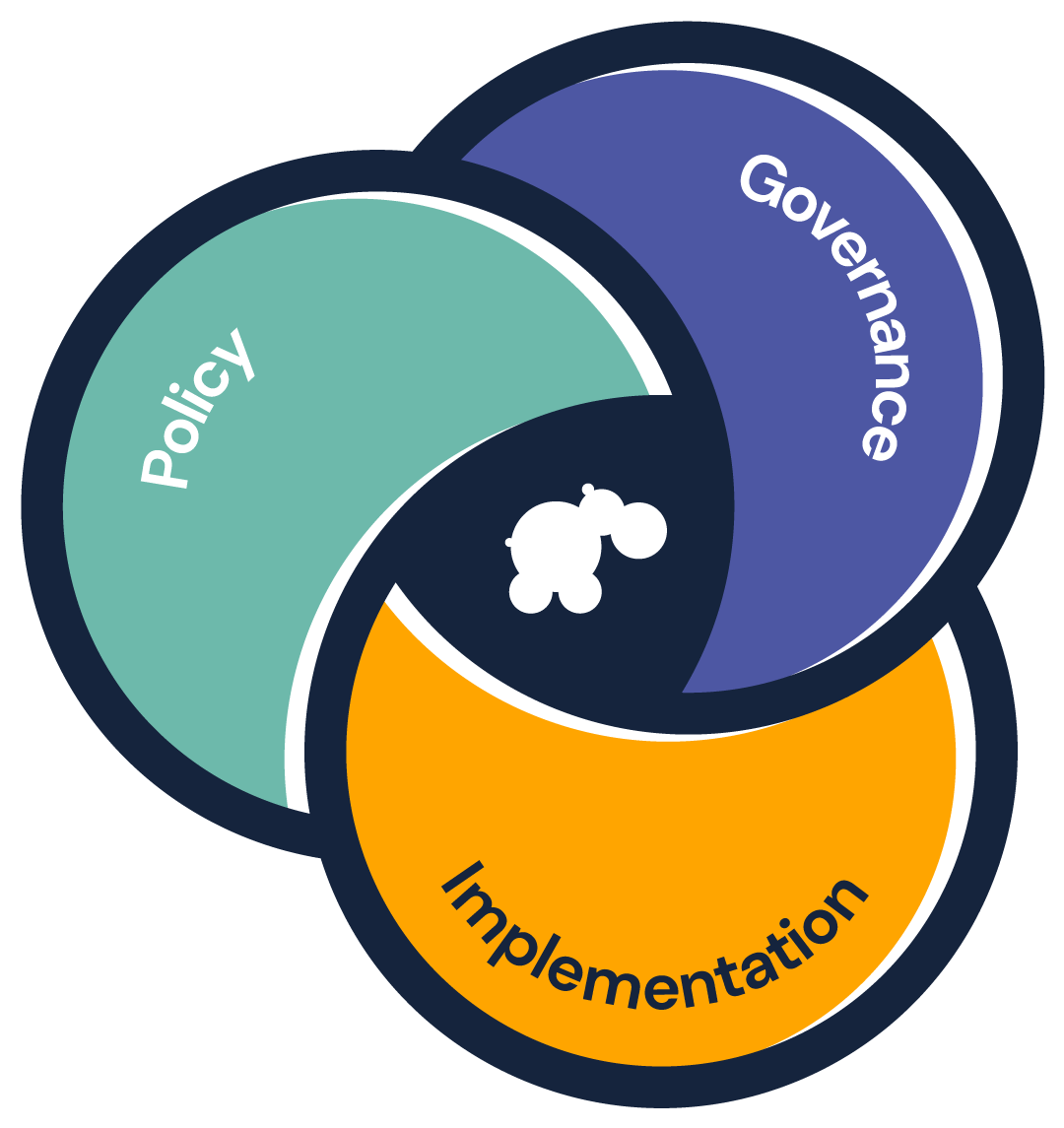

Our balanced approach, offering support in policy, governance and implementation, means that we are well-placed to support public sector and enterprise level organisations in the safe use of AI. Anything less would put public confidence at risk.

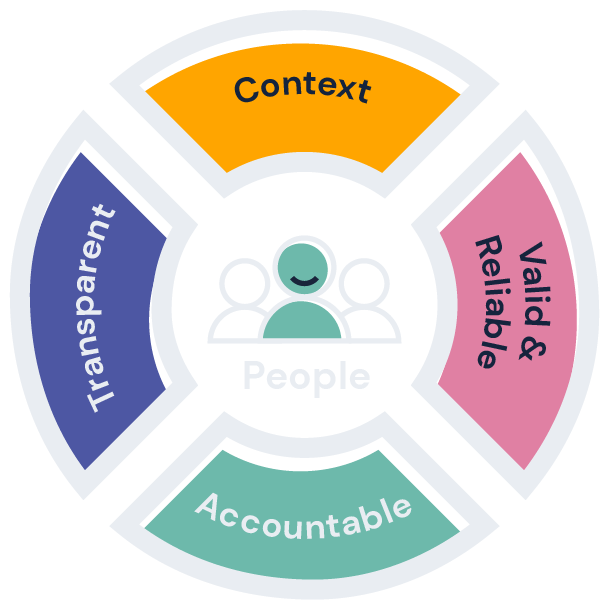

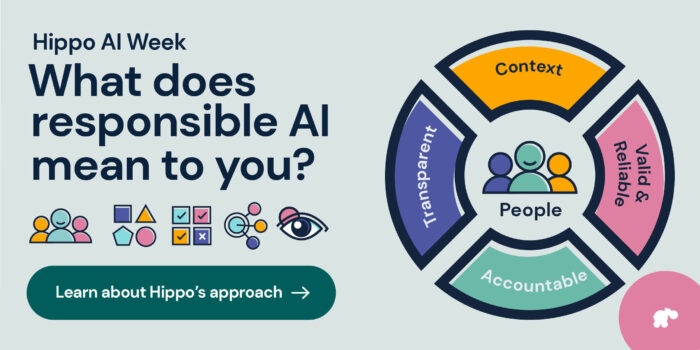

Our Responsible AI approach is based on five core dependencies. We deliberately place people at the heart of our thinking: Responsible AI first looks at how we enable humans and reflect social norms. Around people, we design systems that respect context, are valid and reliable, have accountability baked in and maintain transparency.

The result is a strong foundation on which to build and deploy AI solutions at an enterprise or government level. These dependencies are not simply a safety net or guidance framework that protects the organisation itself; as a source and proof point of trust, we view them as an investment in the value of the business or institution.

People

Artificial intelligence technologies should be developed and used in a way that prioritises human welfare and rights. This ensures AI systems are designed to align with societal values and human-centric principles. The goal is to create AI systems that augment and enhance human capabilities and decision-making, rather than replace or undermine them.

Context

Context draws on two basic ethical elements, values and norms, and any AI application may have to accommodate the differing values and norms of diverse groups. The application should treat these groups equally, while recognising that a compromise may be needed where their values conflict.

Valid & reliable

Validity and reliability are key trust factors for deployed AI systems and are often assessed through ongoing testing or monitoring that confirms a system is performing as intended. AI systems should be designed to maximise positive outcomes and minimise negative impacts, and may require human intervention where the system cannot detect or correct its own errors.

Accountable

When people have to make decisions for someone else, they usually weigh up the pros and cons based on what they think is best for that person. They consider the potential good outcomes and the possible bad outcomes, trying to choose the option that minimises harm and maximises benefits. Once the decision is made, they own it and are answerable for the outcomes, good or bad. A well-designed AI application is no different.

Transparent

AI should be easy for people to detect and understand. People generally do not trust things that cannot be explained or understood. Our interaction with AI is increasing daily, and as our usage grows, so does its use in decisions made about us. For an AI system to gain trust, people must have an appropriate level of understanding and knowledge of it, explained in terms they can follow.

Our promise

We will help our clients deploy AI in a responsible manner that benefits the organisation, the team we work alongside and the users they serve.

~ Darren Hutton, Chief Digital Officer

We can help

We’re a digital services partner that is genuinely invested in helping our clients thrive as modern organisations. Our delivery methodology is truly agile, from concept to reality, supporting innovation and continuous improvement to achieve your desired outcomes.

Discover

Responsible AI: an overview

The pressure to implement AI must not come at the cost of finding a valuable use case, and adoption needs to consider the wider implications, risks and challenges. AI needs to be introduced in a responsible manner.

Responsible AI and core values

At Hippo we use artificial intelligence throughout our services, embedding these solutions only where they are appropriate and beneficial. Importantly, we do so in a way that is consistent with our core values.